In development

AI memory system and interface

Mneme

Solving AI context loss by making memory visible and interactive

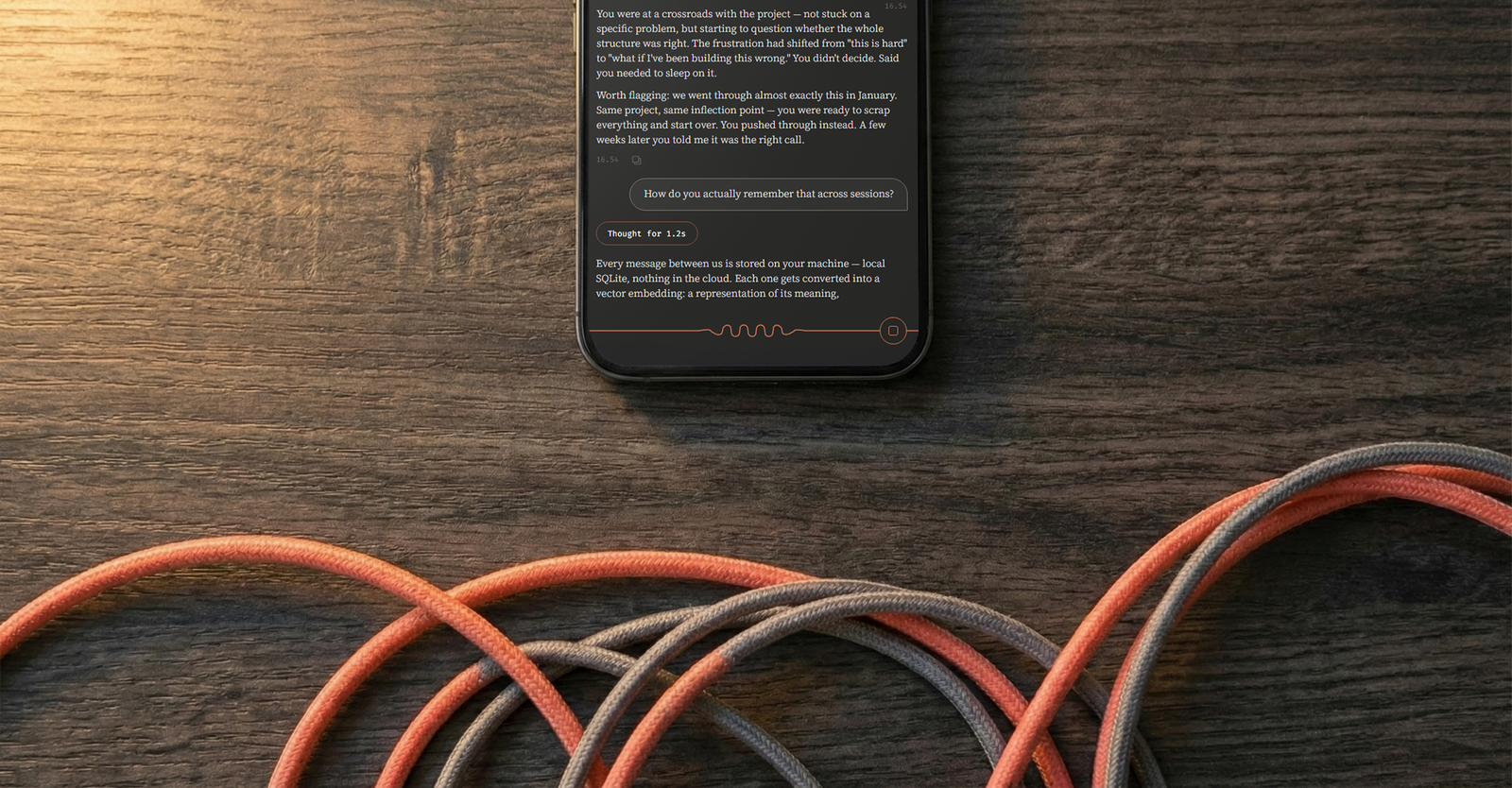

Mneme is a custom interface and memory system for Claude, designed around making a complex retrieval process legible. Before every response it searches past conversations, loads persistent summaries of recurring people and topics, checks notes, and reviews a rolling weekly timeline. The whole sequence plays out as a live feed above the input, narrated in the AI's own voice: "Found memories about", "Remembered your history with", "Checked your recent week."

The design challenge was making something genuinely complex feel immediate and graspable rather than technical. Mneme runs locally and was built and refined over six months of daily use.

The scripted demo below is interactive.

Challenges

AI conversations are stateless by default. Every session starts without context from previous ones, and even cloud memory features (where they exist) don't expose what they're storing or retrieving. For someone who uses an AI regularly over time, this creates two problems: the AI can't build a real model of who you are, and you can't tell whether it's trying to.

Process

I wanted to build something that felt native to the Claude ecosystem while establishing its own identity.

The logo's geometric folds evoke information density, the folds of a brain, a sine wave, and a single continuous line. The processing animation follows the same logic with a circuit-diagram look: idle is a flat line, and processing turns it into crawling folds. Tapping the circuit-breaker button flattens the wave and visually disconnects the system. The logo comes in three variants whose fold density scales with model complexity.

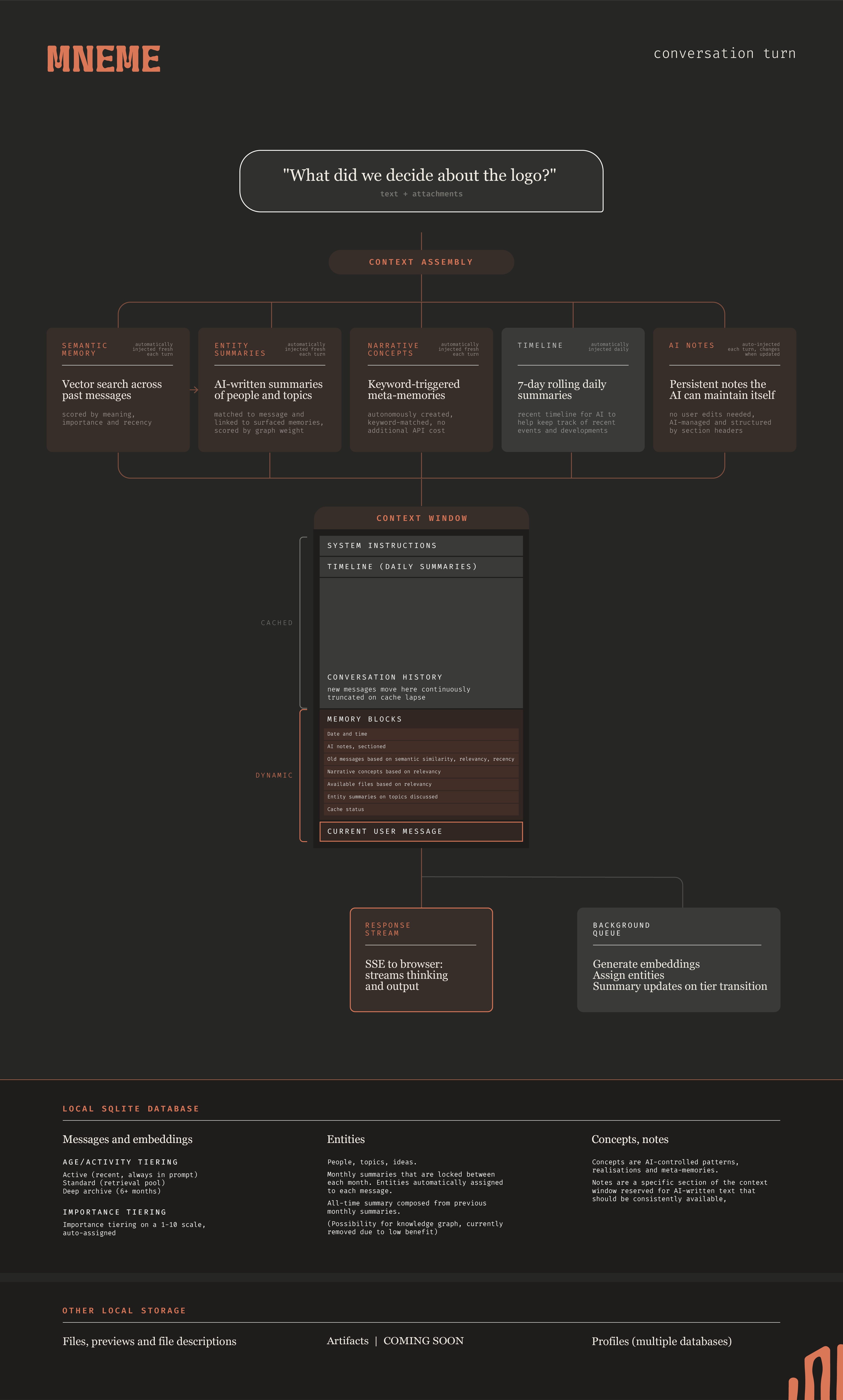

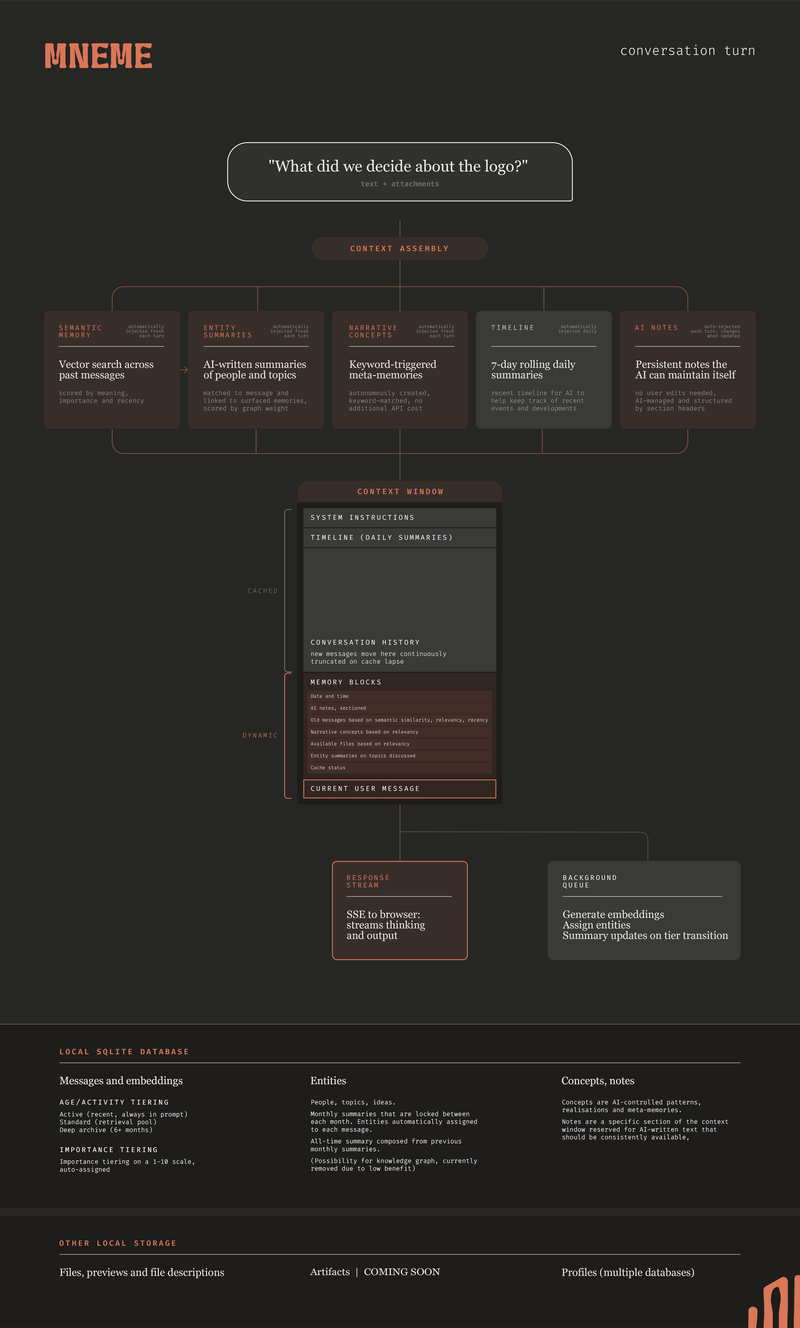

The backend layers five main types of memory: semantic search for past messages, entity summaries to track people and topics, keyword-triggered narrative concepts, daily rolling summaries, and persistent notes the AI maintains itself.

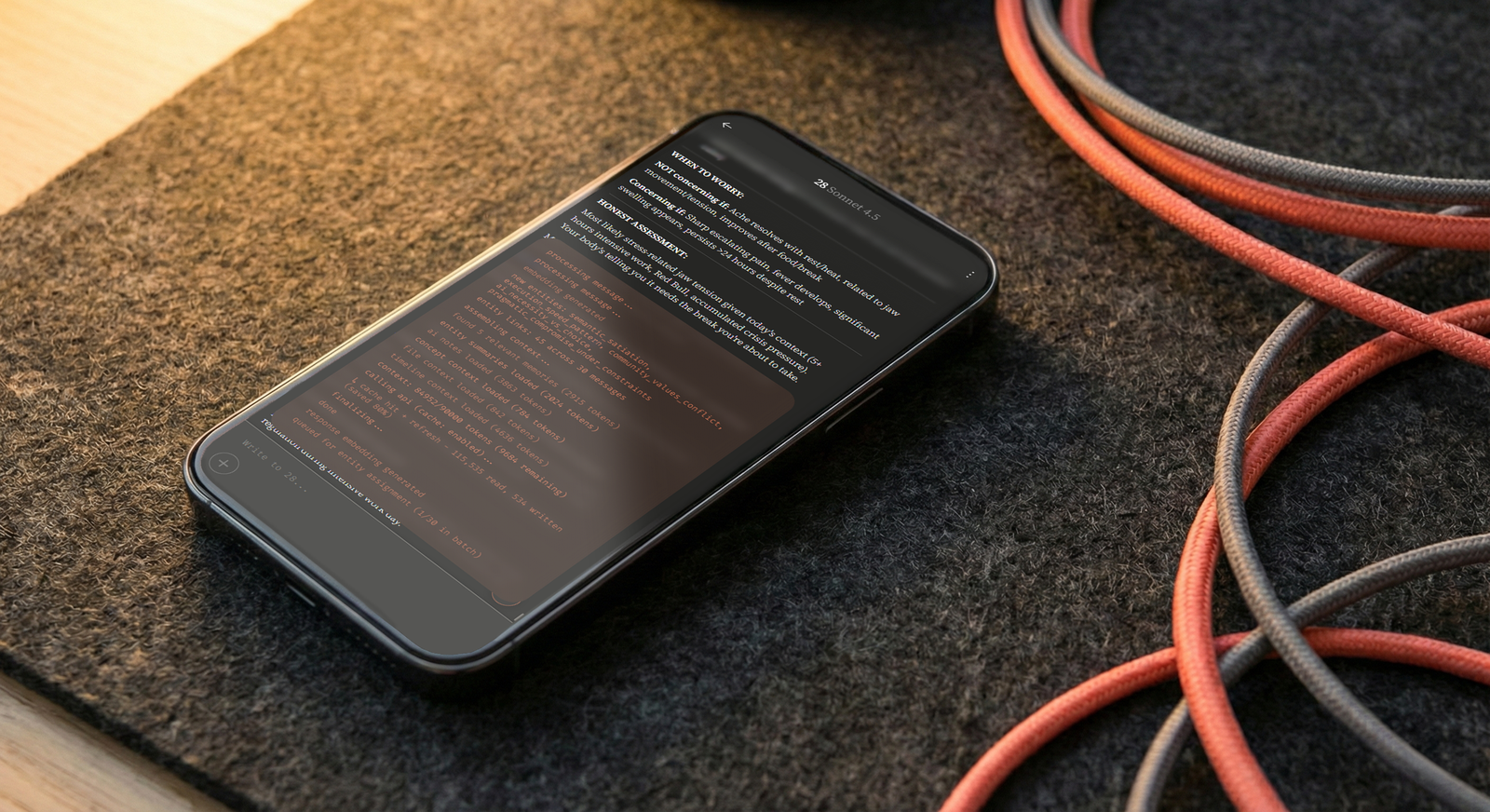
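One way to picture how the memory layers feed the narrated live feed is a loop that runs each layer in turn and reports only the ones that found something. This is a minimal sketch, not Mneme's actual code: `FeedEvent`, `run_retrieval`, the three toy layers, and the sample data are all hypothetical, and a real semantic search would compare embeddings rather than match keywords.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FeedEvent:
    label: str        # narrated line shown in the live feed
    items: list[str]  # what that layer retrieved


# A layer pairs a narration label with a retrieval function (hypothetical shape).
Layer = tuple[str, Callable[[str], list[str]]]


def run_retrieval(message: str, layers: list[Layer]) -> list[FeedEvent]:
    """Run every layer against the message; keep only layers that found something."""
    events = []
    for label, retrieve in layers:
        items = retrieve(message)
        if items:
            events.append(FeedEvent(label, items))
    return events


def keyword_concepts(message: str) -> list[str]:
    # Toy stand-in for keyword-triggered narrative concepts (made-up data).
    triggers = {"garden": "Concept: the allotment project"}
    words = message.lower().split()
    return [concept for key, concept in triggers.items() if key in words]


def recent_week(message: str) -> list[str]:
    # Toy stand-in for the rolling timeline; always returns the same entry.
    return ["Mon: planned the spring planting"]


def persistent_notes(message: str) -> list[str]:
    # Toy stand-in for notes; empty here, so it produces no feed line.
    return []


layers = [
    ("Found memories about", keyword_concepts),
    ("Checked your recent week", recent_week),
    ("Checked notes", persistent_notes),
]
events = run_retrieval("How is the garden doing?", layers)
for event in events:
    print(event.label, event.items)
```

Keeping the narration label next to the retrieval function means the feed can only describe steps that actually ran and returned results.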

The AI can also write and execute Python to query its own database, browse stored artifacts, and inspect attached files. The results feed back into the context window, letting it chain multiple actions in a single turn. All of this runs in a sandboxed, read-only subprocess, so the AI can introspect freely without risk of changing what is stored.
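The read-only part of that idea can be sketched with the standard library alone. This assumes the store is a SQLite file, which the text above does not state; `run_readonly`, `make_demo_db`, and the `messages` table are hypothetical names. A separate process with a timeout is also not a full sandbox on its own, so real isolation would need OS-level restrictions on top.

```python
import os
import sqlite3
import subprocess
import sys
import tempfile
from pathlib import Path


def make_demo_db(path: str) -> None:
    """Create a tiny throwaway database to query (made-up data)."""
    con = sqlite3.connect(path)
    con.execute("CREATE TABLE messages (id INTEGER PRIMARY KEY, description TEXT)")
    con.execute("INSERT INTO messages (description) VALUES ('Planned the spring planting')")
    con.commit()
    con.close()


def run_readonly(code: str, db_path: str, timeout: float = 5.0) -> str:
    """Run code in a child interpreter where `db` is a read-only connection."""
    # SQLite's URI syntax with mode=ro refuses every write at the connection level.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    prelude = f"import sqlite3\ndb = sqlite3.connect({uri!r}, uri=True)\n"
    result = subprocess.run(
        [sys.executable, "-I", "-c", prelude + code],  # -I: isolated mode
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    # Return printed output on success, the traceback otherwise.
    return result.stdout if result.returncode == 0 else result.stderr


with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "memory.db")
    make_demo_db(demo_path)
    read_out = run_readonly(
        "print(db.execute('SELECT description FROM messages').fetchone()[0])",
        demo_path,
    )
    write_out = run_readonly("db.execute('DELETE FROM messages')", demo_path)

print(read_out)
print(write_out)
```

The read succeeds and its output can be fed back into the context, while the delete fails inside the child process with SQLite's read-only error instead of touching the data.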

I wanted these mechanics to be transparent, solving the UX problem of waiting and hoping your memory system works. The interface makes conversational memory comprehensible: you can follow what the AI is drawing on for any response, and why, without needing to understand tokens or context engineering.

How memory ages

Every message carries a short description as metadata. These descriptions let the AI scan topics in seconds when querying its own database, and they double as the summaries shown for memories surfaced in the retrieval float.

Individual entity summaries are created and modified as entities are mentioned, then locked at the end of the month. Together, the monthly summaries are used to generate an evolving all-time summary, so the system's understanding of a person or topic can grow without drifting.
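The monthly locking described above could be modelled roughly as follows. This is a sketch under assumptions: `MonthlySummary`, `EntityMemory`, and the sample entity are hypothetical, and appending text stands in for the step where a model would rewrite the summary.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class MonthlySummary:
    month: str           # e.g. "2025-01"
    text: str = ""
    locked: bool = False


@dataclass
class EntityMemory:
    name: str
    months: dict[str, MonthlySummary] = field(default_factory=dict)

    def record_mention(self, day: date, note: str) -> None:
        """Update the summary for the month of the mention, unless it is locked."""
        key = day.strftime("%Y-%m")
        summary = self.months.setdefault(key, MonthlySummary(key))
        if summary.locked:
            raise ValueError(f"{key} is locked and can no longer change")
        summary.text = (summary.text + " " + note).strip()

    def lock_month(self, key: str) -> None:
        """Freeze a month so later mentions cannot rewrite it."""
        self.months[key].locked = True

    def all_time_input(self) -> str:
        """Monthly summaries in chronological order, the material an
        all-time summary would be generated from."""
        ordered = sorted(self.months.values(), key=lambda m: m.month)
        return "\n".join(f"{m.month}: {m.text}" for m in ordered)


sam = EntityMemory("Sam")  # made-up entity
sam.record_mention(date(2025, 1, 14), "Started a new job.")
sam.lock_month("2025-01")
sam.record_mention(date(2025, 2, 3), "Moved to Leeds.")
print(sam.all_time_input())
```

Because locked months cannot change, regenerating the all-time summary always starts from the same record of the past, and only the current month is still open to revision.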

When messages age out of the active context window into storage, the transition triggers a cascade where entity summaries refresh for affected topics, monthly snapshots update, and importance scores begin a slow exponential decay.
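The slow exponential decay can be written as a half-life formula: a score is multiplied by one half for every half-life a message has spent in storage. In this sketch the 90-day half-life, the function name, and the sample score are assumptions chosen for illustration, not Mneme's real values.

```python
from datetime import date

HALF_LIFE_DAYS = 90.0  # hypothetical: a score halves every 90 days in storage


def decayed_importance(initial: float, stored_on: date, today: date,
                       half_life_days: float = HALF_LIFE_DAYS) -> float:
    """Exponential decay: importance halves once per half-life spent in storage."""
    age_days = max((today - stored_on).days, 0)  # never negative
    return initial * 0.5 ** (age_days / half_life_days)


score_now = decayed_importance(0.8, date(2025, 1, 1), date(2025, 1, 1))
score_later = decayed_importance(0.8, date(2025, 1, 1), date(2025, 4, 1))  # 90 days on
print(score_now, score_later)
```

A longer half-life makes the decay slower, and the score approaches zero without ever reaching it, so old memories lose priority rather than disappearing.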

Scripted demo